Introduction to Responsible AI

Models in today’s world have a real, tangible, and sometimes life-changing impact on the lives of real people, bringing to light an important new side...

A large part of what makes Responsible AI difficult is the vast set of ideas, theories, and practices that it interacts with. As with any budding field, Responsible AI is developing its own vocabulary, sampling terms from both the computer science and social science worlds. Keeping up with the changing terminology that appears in the ever-expanding body of research can be overwhelming. In this series of posts, we’ll begin to unpack some of the many terms that define this space. We don’t claim to have a perfect answer or a complete list of terms, but we hope that this series can serve as a starting point for understanding and further research.

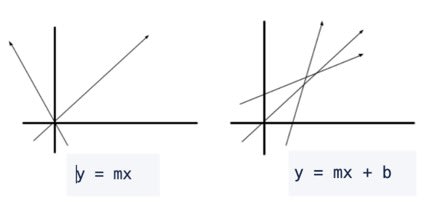

Much of the foundation of Responsible AI revolves around finding and mitigating bias within AI systems. This goal is extremely important, but it is difficult to pin down what it really means. Part of that difficulty stems from the ambiguity of the word “bias” itself. A dictionary would define bias as “an inclination of temperament or outlook, especially: a personal or sometimes unreasoned judgement,” which is likely similar to the working definition most of us carry in our heads. However, many fields put their own spin on what “bias” means, and together those spins create the nuanced understanding needed to reason about bias in Responsible AI. Here’s an outline that begins to explore just a few of these related definitions.

Given the definitions of bias above, it can be challenging to see how machine learning bias could have a direct social impact. In the case of a wolf/husky classifier, predicting “wolf” three-fourths of the time might not cause any harm, especially if the application is just helping wildlife aficionados identify what they’re seeing in the wild. If, however, a hiring model predicts that 98% of the people hired should be male-identifying, there are real societal implications that affect the lives of real people. This is the key to the complexity of understanding bias in Responsible AI: all of the definitions above have important implications when it comes to building Responsible AI models.
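One way to make the hiring example concrete is to measure how a model's positive predictions are split across groups. The snippet below is a minimal sketch under stated assumptions: the predictions and group labels are made-up toy data, and `predicted_positive_share` is a hypothetical helper, not part of any particular library or of any specific auditing method.

```python
from collections import Counter


def predicted_positive_share(predictions, groups):
    """Return each group's share of all positive (1) predictions."""
    positives = Counter(g for p, g in zip(predictions, groups) if p == 1)
    total = sum(positives.values())
    if total == 0:
        return {}  # the model made no positive predictions at all
    return {g: n / total for g, n in positives.items()}


# Hypothetical toy data: 1 means the model recommends hiring the candidate.
predictions = [1] * 50 + [0] * 50
groups = (
    ["male"] * 49 + ["female"] * 1      # the 50 recommended candidates
    + ["male"] * 25 + ["female"] * 25   # the 50 rejected candidates
)

shares = predicted_positive_share(predictions, groups)
for group, share in sorted(shares.items()):
    print(f"{group}: {share:.0%} of recommended hires")
```

A skewed split like this does not explain why the skew exists; it only flags that the model's output deserves a closer look.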

We have yet to build a single concise definition of bias that captures all of the details and nuances of the term. Instead, the CallMiner Research Lab has worked to develop a list of key takeaways from all of the definitions. As with everything in the Responsible AI world, these definitions are flexible, and we’ll continue to update and change them as the field grows.

Bias is not innately harmful. It’s what we do (or don’t do) with it that can cause harm. More on this in a future blog post!

Biased data can reflect a world that doesn’t exist. Training sets that are too narrow will cause the model to perform badly. For example, if I take a handful of coins from a jar and that handful contains only pennies, a model trained on it will assume that quarters, nickels, and dimes don’t exist. A model trained on a sample whose distribution differs from the real world will show statistical bias when presented with unseen test data, which leads to poor performance and creates a greater possibility of causing harm.
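The coin jar example can be sketched in a few lines. This is an illustrative sketch only: the jar contents are invented, and total variation distance is just one simple way (chosen here as an assumption, not taken from the discussion above) to quantify how far a sample's distribution sits from the population it was drawn from.

```python
from collections import Counter


def distribution(items):
    """Return the relative frequency of each distinct item."""
    if not items:
        return {}
    counts = Counter(items)
    return {k: v / len(items) for k, v in counts.items()}


def total_variation_distance(p, q):
    """Half the sum of absolute differences between two distributions."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


# Hypothetical jar: the "real world" the model will eventually see.
jar = ["penny"] * 40 + ["nickel"] * 20 + ["dime"] * 20 + ["quarter"] * 20
# The handful we happened to grab: nothing but pennies.
handful = ["penny"] * 10

jar_dist = distribution(jar)
handful_dist = distribution(handful)

# Coin types that exist in the jar but never appear in the sample.
unseen = sorted(set(jar_dist) - set(handful_dist))
gap = total_variation_distance(jar_dist, handful_dist)

print("Never seen in the sample:", unseen)
print(f"Distance between sample and jar: {gap:.2f}")
```

A distance of 0 would mean the handful mirrors the jar exactly; the closer the value gets to 1, the less the sample says about the real distribution.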

Sometimes biased data reflects the world that does exist. Data bias can come from an inherently unequal state of our world that might be caused by something totally unrelated to your project. We saw this in the news, when hiring algorithms favored resumes that mentioned baseball over softball in industries that were predominantly male. It’s still important to critically analyze these situations: while it may be necessary to preserve these differences, it’s absolutely crucial that you come to that decision through critical analysis and potentially difficult discussions with a diverse group.

Everyone carries biases. From the people who create a project, to the annotators who label data, to the model builders, to the end users, everyone carries their lived experience into their perception of a model. Someone who’s bilingual might be able to help design an annotation task that is more inclusive of non-native English speakers. People from traditionally marginalized groups might see bias in a model that someone with privilege in the domain the model is predicting might not notice. It is crucial that we are mindful of the decisions we make and the biases we carry in every aspect of the job, and that we have a diverse team of creators to help broaden our perspective. Even more than having a diverse team, we must make sure to listen to each other and to take everyone’s opinion into account. Open, constructive dialogue will help us all build better projects in the long run.

Bias in machine learning will always exist, because it’s fundamental to the algorithms and to the outcomes we expect from them. When it comes to reducing harmful bias in machine learning, the most important thing we can do is pay attention. Pay attention to the ways in which our model underperforms. Pay attention to the predictions that we make and how those predictions are used to inform real-world decision making. Pay attention to our colleagues, users, and others when they point out ways in which our models might be creating harm. Then do something about it.
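As one hedged illustration of what paying attention to underperformance can look like in practice, the sketch below breaks accuracy down by group instead of reporting a single overall number. The labels, predictions, and group names are hypothetical toy data, and `accuracy_by_group` is an illustrative helper rather than an established API.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return the fraction of correct predictions within each group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


# Hypothetical toy data for two groups, "a" and "b".
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 1, 1, 0, 0, 1]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
per_group = accuracy_by_group(y_true, y_pred, groups)

print(f"overall accuracy: {overall:.3f}")
for group, acc in sorted(per_group.items()):
    print(f"group {group}: {acc:.3f}")
```

In this toy data the overall figure hides the fact that nearly all of the errors fall on one group, which is the kind of gap a single aggregate metric can mask.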
